Yuchen Lin’s Blog

Hey there, this is Yuchen Lin. I share my blog posts on this Notion-based site. Please feel free to contact me if you have any comments or questions! 😃

Posts

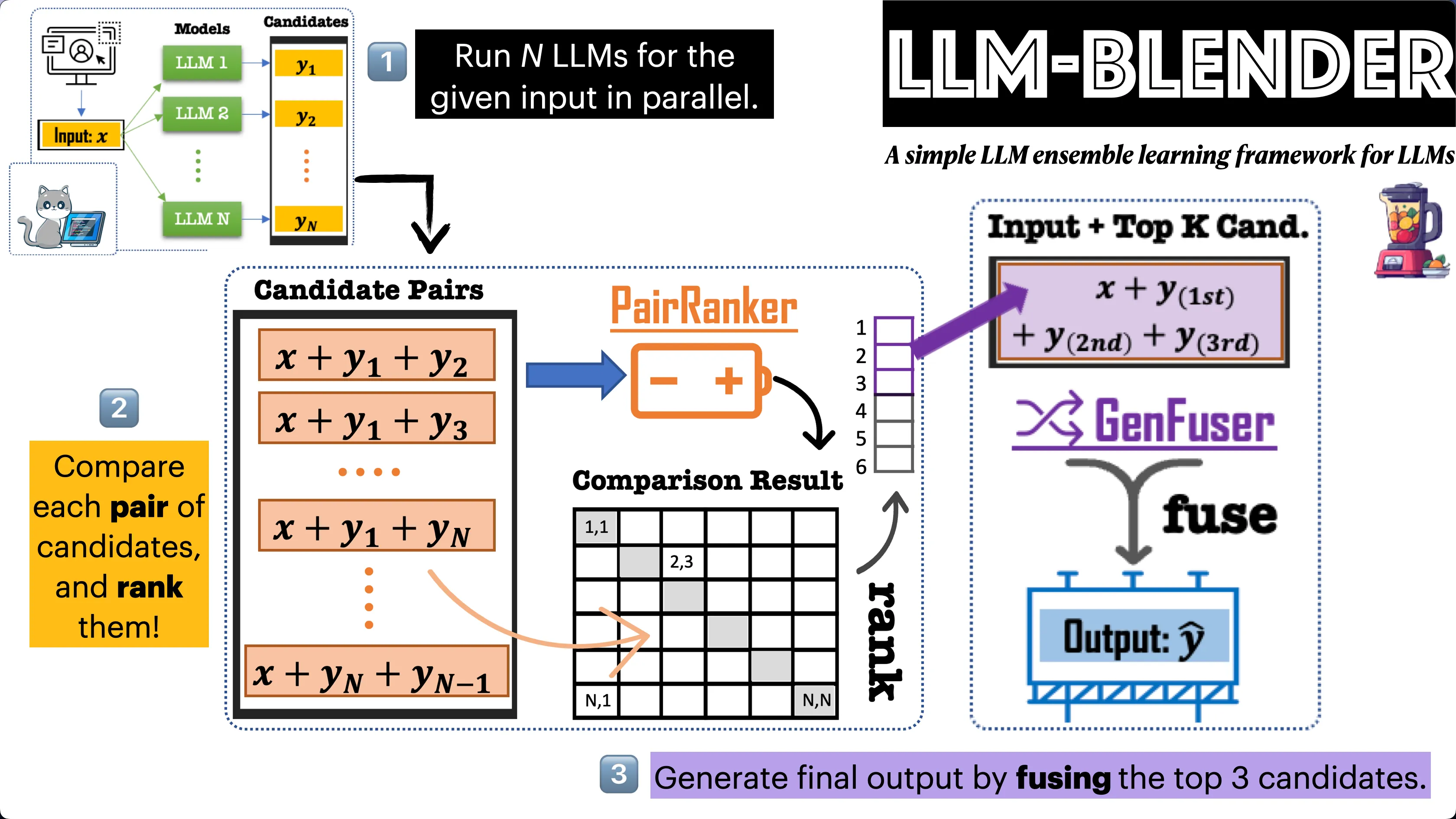

LLM-Blender: A Simple Ensemble Learning Framework for LLMs

With the rise of open-source large language models (LLMs) such as Alpaca, Vicuna, and Falcon, openly available models are advancing rapidly. Certain models demonstrate superior overall performance on leaderboards like AlpacaEval and Chatbot Arena. However, is it reasonable to stick with one top-performing LLM for all user inputs? The answer may not be as straightforward as one might think. In this blog post, we are delighted to introduce our recent paper published at ACL 2023, in which we propose LLM-Blender, a simple two-stage ensemble learning framework. Alongside it, we introduce a dataset named MixInstruct for evaluation.

July 19, 2023 | Keywords: LLM, Ensemble Learning, Instruction Tuning

SwiftSage: Building Agents for Complex Interactive Tasks via Fast & Slow Thinking

Large language models (LLMs) like GPT-4 have revolutionized the field of AI by demonstrating exceptional performance on various reasoning tasks. However, most of this research has been limited to static environments, such as solving math problems or answering factoid questions. This raises the question: can LLMs be used for complex interactive tasks in the real, physical world? Imagine having an agent that could help complete everyday embodied tasks. Can LLMs accomplish this?

June 21, 2023 | Keywords: Agent, LLM, Dual Process Theory, Text Game, Embodied AI